The Null Device

Posts matching tags 'society'

2016/9/5

Observing political debate, I have noticed a trope that keeps recurring, particularly (these days) on the Right. I'll call it the Gordian Knot Delusion. It says, in essence, “the so-called experts/eggheads/‘intellectuals’ keep going on about how complex things are, but they're liars. When you get down to it, things really are simple.” (There is an implicit “Watch this!” after that, as the speaker purports to bulldoze their way through some issue that namby-pamby liberals and ivory-tower boffins have been wringing their hands ineffectually over, like the two-fisted, lantern-jawed hero of one of those old sci-fi paperbacks the Sad Puppies lament aren't being written any more.) An example of the Gordian Knot Delusion, on that favourite subject of taxes/economics, recently manifested itself in the following tweet from Conservative commentator Daniel Hannan:

If tobacco taxes disincentivise smoking, and petrol taxes disincentivise driving, what do you suppose income taxes do?

— Daniel Hannan (@DanielJHannan) September 4, 2016

It does not need to be pointed out that this is an extremely simplistic argument, more an act of trolling (in its original sense of seeking to provoke a pile-on of responses) than a serious inquiry in good faith, at least, if one assumes that the author is not a simpleton. It achieved its aim, in that others piled on with rebuttals on the same level, along the lines of “if olive oil is made of olives, what is baby oil made of?”. But if one takes the premise beneath it at face value, or at least treats it as something more meaningful than wordplay, the Gordian Knot Delusion comes through. Taxes disincentivise prosperity, it implies, unqualifyingly; cut taxes to the bone and watch prosperity take off like a rocket. And ignore the tweedy, elbow-patched fellow there saying that it's more complicated than that; the man looks like a commie, and is probably after your piece of the pie.

The Gordian Knot Delusion, the idea that things are simpler than they are claimed to be, is trotted out by amateur spectators in a lot of fields. Economics is a big one: witness the “common-sense” idea that national economies work like household budgets, with a largely fixed income that is unaffected by the level of spending. By this token, one can believe that deficit spending is inherently irresponsible and austerity is, in itself, good economic housekeeping. (This, of course, falls apart when one considers that economic activity generates wealth, and that savings at rest have no economic impact, but it feels enough like common sense that one can persuade oneself that these objections are sophistry by ivory-tower eggheads, Marxists and moochers.) Ecology and the environment are another area; nobody can see global warming, or when they can, one can believe that the evidence is still out (or, once it isn't, that it's too late to do anything, so crank your air conditioning up and enjoy the ride); and as for that habitat of endangered newts the hippies are protesting about, let's just drive a motorway through it and see what happens; betcha that everything will be alright. The bees are dying off? Who cares about a buncha dumb bugs! The coral reefs are too? The tabloids say they're not. And if they are, so what?

And then there's modern society in general: gender-neutral job titles and ladies wearing trousers and lactose-free milk in the supermarket, oh my! Your son, who used to be your daughter, is taking medication for ADHD, your other daughter has a girlfriend, your boss wears a nose ring, and the golliwog doll from your childhood is now a potential hate crime. In the good old days, these things didn't exist, or if they did, they were hammered flat like a lump under the rug; people accepted their lot in life, and, as the refrain goes, everything was alright. (One part of this is the myth that these complex conditions, from gluten intolerance to gender dysphoria, don't actually exist, but are made up by an unholy alliance of bureaucrats, drug companies, the liberal media and people who want to feel like special snowflakes; the corollary: were it not for the conspiracy, a sharp clip around the ear would sort them out just as well.)

At its core, the Gordian Knot Delusion is an application of the 80/20 rule to the modern world at large; the belief that complexity is superfluous, and that rather than fretting over it, one should just stride over and cut the knot, deciding that the world is actually simple; witnessing the lack of an immediate catastrophe, one will find one's common sense and derring-do vindicated. (The original Gordian Knot was cut by that gung-ho man of action, Alexander the Great, which is always a flattering comparison.) The other part of the Gordian Knot Delusion is the stab-in-the-back narrative of how the world started to look deceptively complex. As the paranoiac's dictum goes, shit doesn't just happen, but is caused by assholes; in this case, all that talk about how complex things are is the work of a conspiracy; a motley crew of commie traitors, ivory-tower academics, so-called “intellectuals” corrupted by book-learning, miscellaneous perverts, Satanic cultists and out-and-out crooks and thieves out to keep the gravy-train of complexity going, all the better to steal from the simple honest folks. (The trope about climate change being a massive fraud for the purpose of maintaining funding for otherwise worthless research is a classic of the genre.) It is, as conspiracy theories tend to be, a compelling story, especially to those who feel themselves bewildered or victimised by the world.

Whilst ostensibly associated with the Right these days, the Gordian Knot Delusion is actually the very antithesis of Edmund Burke's Conservatism, formulated in the wake of that catastrophic leftist severing of this knot, the French Revolution. Burke's argument (framing Conservatism for a world where the divine right of kings was no longer accepted and the University of Chicago School of Economics had yet to come into being and coin its modern analogue, trickle-down economics) was that things are much more complicated than one can comprehend, that bold attempts to destroy ancient injustices are also likely to have countless unintended consequences, and that one should stick to gradual, tentative reforms at best, if not to just give up and learn to live with the world as it is in all its richness and iniquity. Today, one might expect to hear that sort of argument, but only from a hair-shirted greenie warning against tampering with Mother Gaia's blessings. The Robespierres of the Right are all too happy to break things and observe that, on a macro level, everything is alright (whilst circularly classifying those for whom things are not alright as bums and sore losers). These radicals are in alliance with a growing number of people who are anything but radical in temperament, but who have been radicalised by the rapid pace of change, and for whom the idea of turning back the clock to (what in retrospect seems like) a simpler time has appeal. The shift of the Gordian Knot from the Left to the Right could be a result of the increasingly rapid pace of social and technological change.

2014/8/6

An interesting article on the artificial and constructed nature of neoliberalism, the supposedly neutral post-ideological ideology, touted as the mirror of that which is and cannot but be:

The public at large would be hard pressed to know that this strange doctrine of economics actually had a place of origin in the Mont Pèlerin Society, which was the brainchild of Friedrich Hayek. Mirowski tells us that one of the keys to Neoliberalism has always been a sort of “double-truth”, the ability to convey both an outer exoteric version of the truth to the public at large, while at the same time conveying an inward esoteric truth to those in the know: what he terms the Neoliberal Thought Collective.

One fundamental contradiction of neoliberalism is that between its assertion of naturalism—the market is the state of nature, to deny it is to fall foul of it, and resistance is useless—and that the specific kinds of market forces, and specific order of society, championed by neoliberalism are anything but natural; in that, neoliberalism diverges from the classical liberalism it purports to be, and becomes a constructivist ideological project; human relations need to be redefined to fit its market-based paradigm, and alternatives need to be bulldozed out of the way, to fit the vested interests of its stakeholders. No alternative must be allowed to challenge this model:

6. Neoliberalism thoroughly revises what it means to be a human person. “Individuals” are merely evanescent projects from a neoliberal perspective. Neoliberalism has consequently become a scale-free Theory of Everything: something as small as a gene or as large as a nation-state is equally engaged in entrepreneurial strategic pursuit of advantage, since the “individual” is no longer a privileged ontological platform. Second, there are no more “classes” in the sense of an older political economy, since every individual is both employer and worker simultaneously; in the limit, every man should be his own business firm or corporation; this has proven a powerful tool for disarming whole swathes of older left discourse. Third, since property is no longer rooted in labor, as in the Lockean tradition, consequently property rights can be readily reengineered and changed to achieve specific political objectives; one observes this in the area of “intellectual property,” or in a development germane to the crisis, ownership of the algorithms that define and trade obscure complex derivatives, and better, to reduce the formal infrastructure of the marketplace itself to a commodity. Indeed, the recent transformation of stock exchanges into profit-seeking IPOs was a critical neoliberal innovation leading up to the crisis. Classical liberals treated “property” as a sacrosanct bulwark against the state; neoliberals do not. Fourth, it destroys the whole tradition of theories of “interests” as possessing empirical grounding in political thought.

7. Neoliberals extol “freedom” as trumping all other virtues; but the definition of freedom is recoded and heavily edited within their framework. It is economic freedom only.

Of course, imposing the economic freedom of those with the means to exercise it requires considerable effort, with significant proportions of the economy being employed as guard labour:

12. The neoliberal program ends up vastly expanding incarceration and the carceral sphere in the name of getting the government off our backs.

Friedrich Hayek himself, as much as he cautioned against the threat of Communist serfdom (which would be an inevitable consequence of state intervention in the economy), was not a fan of democracy, seeing it at worst as a problem to be mitigated, and at best a placebo button, giving those at the top of the pyramid a veil of legitimacy that, say, the Bourbons and Romanovs didn't have:

Hayek, no friend of democracy, looked upon this closed society of elite intellectuals as if it were a new Platonic Academy. In fact he saw democracy as a hindrance to the neoliberal world view, saying to his friend Bertrand de Jouvenel, “I sometimes wonder whether it is not more than capitalism this strong egalitarian strain (they call it democracy) in America which is so inimical to the growth of a cultural elite.”

Meanwhile, it turns out that the neoliberal order is not particularly good for the mental health of those subjected to it, or so argues that perennial Cassandra of the Left, George Monbiot, citing a new book by a Belgian psychoanalyst, Paul Verhaeghe. (No idea whether he identifies as a Marxist psychoanalyst, a peculiar school of thought which, these days, seems to outnumber Marxist economists.)

The market was meant to emancipate us, offering autonomy and freedom. Instead it has delivered atomisation and loneliness. The workplace has been overwhelmed by a mad, Kafkaesque infrastructure of assessments, monitoring, measuring, surveillance and audits, centrally directed and rigidly planned, whose purpose is to reward the winners and punish the losers. It destroys autonomy, enterprise, innovation and loyalty, and breeds frustration, envy and fear. Through a magnificent paradox, it has led to the revival of a grand old Soviet tradition known in Russian as tufta. It means falsification of statistics to meet the diktats of unaccountable power.

These shifts have been accompanied, Verhaeghe writes, by a spectacular rise in certain psychiatric conditions: self-harm, eating disorders, depression and personality disorders. Of the personality disorders, the most common are performance anxiety and social phobia: both of which reflect a fear of other people, who are perceived as both evaluators and competitors – the only roles for society that market fundamentalism admits. Depression and loneliness plague us.

And here is a piece by the BBC's esoteric historian, Adam Curtis, about the men who brought Hayek's neoliberalism—and its vector, the pseudo-academic, ideological PR agency known as the think tank—to Britain; a tale also involving legendary pirate radio station Radio Caroline, rock star turned gonzo anti-politician Screaming Lord Sutch, and the small-'l' libertarianism of the Sixeventies:

The Think Tank that Antony Fisher set up was very different. It had no interest in thinking up new ideas because it already knew the "truth". It already had all the ideas it needed laid out in Professor Hayek's books. Its aim instead was to influence public opinion - through promoting those ideas. It was a big shift away from the RAND model - you gave up being the manufacturing dept for ideas and instead became the sales and promotion dept for what Hayek had bluntly called "second-hand ideas".

Reg Calvert was part of an old, unruly tradition of true independence and libertarian freedom. A real buccaneer who would ignore rules and the structure of class and power in Britain while merrily going his own way. Smedley on the other hand was a "privateer" only to the extent that he wanted to bring the private sector back to power in Britain. Other than that he wanted the traditional power structure to remain the same. And to do this he (and his Think Tank) wanted to reinvent the free market as a managed system - managed by them, and any true "privateer" - like Reg - who challenged that power was doomed.

If one sees the epoch from the youthquake of the Sixeventies to the triumph of Reagan and Thatcher's free-market ideology and a globalised quasi-feudal corporatism with the levers of power far out of the reach of the populace as one revolution, it could be argued that this was another case of a revolution (in this case, loosely defined as a period of phase transition or instability, in which old certainties come undone and the future is up for grabs) being seized by a well-organised faction with its own Nietzschean will to power. In this case, the Bolsheviks are the interests of concentrated wealth and power, their ability to exercise their power limited by things such as Roosevelt's New Deal, or even the post-Enlightenment doctrines of universal human rights, and the hippies (in the loose sense of the word) are the chumps who kicked off the revolution and then got it taken from them by someone far more devious.

2014/2/20

On occasion of a Women In Rock mini-festival on Melbourne radio station 3CR, Mess+Noise got Ninetynine's Laura Macfarlane and the members of the all-female rock trio Dead River to interview each other:

Laura: Overall I think things with gender equality in music have improved slightly but it still needs more work. There could be more female presence in the technical side of music. For instance there aren’t many female masterers still. It also varies a lot between countries. Ninetynine has played in countries and cities where being a female musician is still a novelty. Those shows always stick out in my memory because usually one female person in the audience will come up and tell you that they really appreciate seeing female musicians. Maybe they were thinking of starting their own band, but hadn’t seen a live band with women in it. It is always special to feel like maybe you have helped encourage other women in some small way.

Laura: Although Ninetynine does not exclusively reference Get Smart, we do like a lot of things people relate to the name, including agent 99. She’s great. We also wanted to use a number as a band name because it can work well in countries where people don’t speak a lot of English. I think The Shaggs would be my favourite ’60s girl group.

Dead River: Despite plenty of evidence that women are capable and creative masters of their instruments and gear (PJ Harvey, Savages, Kim Gordon, to name a few), there are prevailing paternalistic attitudes that continue to undermine women in music. I’m sure many female musicians can relate to the experience of a male mixer walking on stage and adjusting her amp or telling her how to set her levels. Or being asked if you’re the “merch girl” or “where’s your acoustic guitar?” after you’ve just lugged an entire drum kit or Orange stack through the door.

Meanwhile, the members of Ninetynine have recorded a song to raise funds for protests against the East-West road tunnel, under the name “Tunnel Vision Song Contest”. It sounds like Ninetynine at their most Sonic Youth-influenced, though it is a bit light on the Casiotone and chromatic percussion.

2013/12/7

On Smarm, an essay pointing out that the problem with the internet is not snark but its condemnation, and through that, smarm; i.e., emotive appeals to the idea of positivity as a virtue (as if it were motherhood or apple pie or adorable kittens), and condemnation of negativity in general:

Over time, it has become clear that anti-negativity is a worldview of its own, a particular mode of thinking and argument, no matter how evasively or vapidly it chooses to express itself. For a guiding principle of 21st century literary criticism, BuzzFeed's Fitzgerald turned to the moral and intellectual teachings of Walt Disney, in the movie Bambi: "If you can't say something nice, don't say nothing at all."

Smarm (whose genesis, in its current form, the article lays at the feet of that one-man Coldplay of letters, Dave Eggers, who exhorted readers to “not dismiss a movie until you have made one”, singlehandedly reserving the right to engage in, rather than merely consume, culture for those within the culture industry) may be most obviously evident on the web, in cloyingly snark-free websites like Buzzfeed and Upworthy (the latter of which spawned a satirical webtoy), and the one-sided boosterism of the “like” button, but its effects go beyond the risk of ending up with an overly warmed heart and a jaw needing to be picked up off the floor. As a content-free (and thus outside of the criteria of debate) appeal to a nebulous ideal of civility or niceness (and surely everybody loves niceness, much like kittens and cupcakes), it is a tool for disingenuously shutting down challenging voices, and is very useful for bolstering the status quo when appeals to, say, the divine right of kings or the Hobbesian necessity of there being an ultimate authority, no longer hold water: don't do it because I said so, but do it because kittens.

Smarm hopes to fill the cultural or political or religious void left by the collapse of authority, undermined by modernity and postmodernity. It's not enough anymore to point to God or the Western tradition or the civilized consensus for a definitive value judgment. Yet a person can still gesture in the direction of things that resemble those values, vaguely.

As concerns about “civility” and the “tone of debate” and such are raised, the result is often a soupy homogenate of truisms, motherhood statements and content-free manufactured consensus, meeting in the middle and staying there, bathed in a glow of positive sentiment: democratic debate reduced to calming mood lighting. Which undoubtedly serves interests behind the scenes just fine.

Here is Obama in 2012, wrapping up a presidential debate performance against Mitt Romney: “I believe that the free enterprise system is the greatest engine of prosperity the world's ever known. I believe in self-reliance and individual initiative and risk-takers being rewarded. But I also believe that everybody should have a fair shot and everybody should do their fair share and everybody should play by the same rules, because that's how our economy is grown. That's how we built the world's greatest middle class.”

The lone identifiable point of ideological distinction between the president and his opponent, in that passage, is the word "but." Everything else is a generic cross-partisan recitation of the indisputable: Free enterprise ... prosperity ... self-reliance ... initiative ... a fair shot ... the world's greatest middle class.

And, of course, smarm is useful for ruling out points of view deemed to be inadmissible, on the grounds that they are too negative, or confrontational, or that we have outgrown such petty squabbling about actual issues:

The New York Times reported last month that in 2011, the Obama Administration decided not to nominate Rebecca M. Blank to be the head of the Council of Economic Advisers, because of "something politically dangerous" she had written in the past: In writing about poverty relief, she had used the word "redistribution."

Like every other mode, snark can sometimes be done badly or to bad purposes. Smarm, on the other hand, is never a force for good. A civilization that speaks in smarm is a civilization that has lost its ability to talk about purposes at all. It is a civilization that says "Don't Be Evil," rather than making sure it does not do evil.

Topically, we are currently witnessing a tsunami of smarm over the recently deceased Nelson Mandela, as right-wing politicians, many of whom wore HANG MANDELA badges at their Conservative Students meetings or lobbied against sanctions against the apartheid regime, fawningly profess what an inspiration the great man had been to them, with the implication that Mandela was not a freedom fighter but some kind of apolitical, beatific self-help guru, a Princess Diana in Magical Negro form, come to heal us with peace and love. It's ironic to think that, as utterly wrong as Margaret Thatcher was when she denounced Mandela as a terrorist, her view was at least grounded in reality, unlike the insipid words of content-free praise her successors are heaping upon him.

2013/10/20

From an article by Nick Cohen about the current Frieze art fair in London, an observation on the function that huge, tacky-looking artworks fulfil in validating their wealthy purchasers' status; Cohen's argument is that monumental kitsch is a peacock-tail-like mechanism for (expensively and unforgeably) demonstrating that one has status putting one beyond the criticism of one's inferiors:

The justifications from the critics whom galleries always seem able to find to dignify the shallow add to the melancholy spectacle. They talk of challenging our notions of what is art as Duchamp did with his urinal. They forget that Duchamp offered his "fountain" to a New York show in 1917 – almost a century ago, and his once radical ideas are now so established they will soon deserve a telegram from the Queen. "Everything changes except the avant garde," said Paul Valéry. Yet Frieze shows one change, although not a change for the better.

Collectors do not buy Koons because he challenges their definitions of art. The ever-popular explanation that the nouveau riche have no taste strikes me as equally false – there's no reason why the nouveau riche should have better or worse taste than anyone else.

What a buyer of a giant kitten or a gargantuan fried egg says to those who view his purchase is this: "I know you think that I am a stupid rich man who has wasted a fortune on trash. But because I am rich you won't say so and your silence is the best sign I have of my status. I can be wasteful and crass and ridiculous and you dare not confront me, whatever I do."

2013/9/25

Apparently Thailand these days is full of homeless European/American blokes; mostly middle-aged, and often alcoholic, they spend their time drinking and sleeping rough on beaches, which is considerably less idyllic than the big-rock-candy-mountain image the description evokes:

Steve, who declined to give his surname over fears that his long-expired visa could land him in jail, said he has spent two years sleeping rough on Jomtien Beach, a 90-minute drive from Bangkok. “I’ve gone 14 days without food before. I lived off just tea and coffee,” he told The Independent. After his marriage of 33 years ended seven years ago, Steve began regular visits to Thailand before setting up permanently in Pattaya, a seaside resort with a sleazy reputation close to Jomtien. “I’m a bit of a sexaholic,” he says, also admitting a fondness for alcohol.

Paul Garrigan, a long-time Thai resident, isn’t surprised by the growing problem of homeless and stranded Westerners. The 44-year-old spent five years “drinking himself to death” in Thailand before giving up alcohol in 2006 and writing a book called Dead Drunk about his ordeal and the expats who have fallen on hard times in the country. He told The Independent: “I’d been living in Saudi Arabia where I worked as a nurse but I’ve been an alcoholic since my teens and, after a holiday to Thailand in 2001, I decided I may as well drink myself to death on a beautiful island in Thailand. Like many people I taught English at a school but spent much of my time on islands such as Ko Samui where I could start drinking early in the morning and not be judged.”

Meanwhile in the US, some homeless people are apparently surviving on Bitcoin; spending their days in public libraries earning the coins by doing vaguely sketchy online work (watching videos to bump up YouTube counters is mentioned; perhaps armies of the destitute to solve CAPTCHAs, artisanally hand-spam blog comments or otherwise laboriously defeat anti-bot countermeasures could make economic sense in today's climate too) and then cashing out through gift card services. Meanwhile, homelessness charities are embracing Bitcoin:

Meanwhile, Sean’s Outpost has opened something it calls BitHOC, the Bitcoin Homeless Outreach Center, a 1200-square-foot facility that doubles as a storage space and homeless shelter. The lease – and some of the food it houses — is paid in bitcoins through a service called Coinbase. For gas and other supplies, Sean’s Outpost taps Gyft, the giftcard app Jesse Angle and his friends use to purchase pizza.

(I suspect that the photo of the homeless man “mining Bitcoins” on the park bench on his laptop is mislabelled; wouldn't all the easily minable Bitcoins have been tapped out, with the computational power required to mine any further Bitcoins essentially amounting to already having thousands of dollars of high-end graphics cards lying around and using them to heat your house, rather than something one could do with an old battery-operated laptop on a park bench?)

2013/7/10

An interesting article on cryptolects, secret group languages whose purpose is to conceal meaning from outsiders:

Incomprehension breeds fear. A secret language can be a threat: signifier has no need of signified in order to pack a punch. Hearing a conversation in a language we don’t speak, we wonder whether we’re being mocked. The klezmer-loshn spoken by Jewish musicians allowed them to talk about the families and wedding guests without being overheard. Germanía and Grypsera are prison languages designed to keep information from guards – the first in sixteenth-century Spain, the second in today’s Polish jails. The same logic shows how a secret language need not be the tongue of a minority or an oppressed group: given the right circumstances, even a national language can turn cryptolect. In 1680, as Moroccan troops besieged the short-lived British city of Tangier, Irish soldiers manning the walls resorted to speaking as Gaeilge, in Irish, for fear of being understood by English-born renegades in the Sultan’s armies. To this day, the Irish abroad use the same tactic in discussing what should go unheard, whether bargaining tactics or conversations about taxi-drivers’ haircuts. The same logic lay behind North African slave-masters’ insistence that their charges use the Lingua Franca (a pidgin based on Italian and Spanish and used by traders and slaves in the early modern Mediterranean) so that plots of escape or revolt would not go unheard. A Flemish captive, Emanuel d’Aranda, said that on one slave-galley alone, he heard ‘the Turkish, the Arabian, Lingua Franca, Spanish, French, Dutch, and English’. On his arrival at Algiers, his closest companion was an Icelander. In such a multilingual environment, the Lingua Franca didn’t just serve for giving orders, but as a means of restricting chatter and intrigue between slaves. 
If the key element of the secret language is that it obscures the understandings of outsiders, a national tongue can serve just as well as an argot.

The article goes on to mention polari, which originated as a travelling entertainers' argot and ended up being a cryptolect used by gay men in 20th-century Britain, becoming largely obsolescent after homosexuality was decriminalised, surviving as a piece of period colour in artefacts like Morrissey's song Piccadilly Palare.

With its roots in Yiddish, cant, Romani, and Lingua Franca, Polari was a meeting-place for languages of those who were too often forced to hit the road; groups who, however chatty, tend to remain silent in traditional historical accounts. Today, the spirit of Polari might be said to live on in Pajubá (or Bajubá), a contact language used in Brazil’s LGBT community, which draws its vocabulary from West African languages – testimony to the hybrid, polyvocal processes through which a cryptolect finds voice.

Of course, as the whole point of a cryptolect is to conceal meaning, as soon as some helpful soul compiles a crib sheet, they kill that particular version of the language as surely as a butterfly collector with a killing jar. (An example of this that has become a comedic trope is parents, politicians and other grown-ups trying to be hip to the groovy lingo of teenagers and falling flat.)

The work of the chronicler of cryptolect must always end in failure. These are languages which need to do more than keep up with current usage: they have to stay ahead of it, burning bridges where the vernacular has come too close; keeping their distance from the clear, the comprehensible. When Harman returned to the subject of pedlars’ French, his promises of understanding came with a new caveat: ‘as [the canting crew] have begun of late to devise some newe tearmes for certaine things: so will they in time alter this, and devise as evill or worse’. We can’t write working dictionaries of secret languages, any more than we can preserve a childhood or catch a star.

Not all cryptolects belong to marginalised, disempowered or nefarious outsider groups (say, itinerant thieves, galley slaves, sexual minorities or minors under the totalitarian regime of parental authority); various technical jargons have something of the cryptolect about them where they avoid using laypersons' terminology in favour of synonymous terms specific to their subcultures. This could be argued to be a good thing, as confusion can occur when words have both technical and vernacular meanings (take for example the word “energy” as used by physicists and New Age mystics). Indeed, whether, say, International Art English is a cryptolect could come down to whether it serves to actually communicate to an in-group or just as a form of ritual display.

2013/7/4

Tom Ellard, formerly of industrial electropop combo Severed Heads and now an academic teaching the digital arts, takes the world of art and the vocation of the Artist to task in an essay titled Five Reasons Why I Am Not An ‘artist’. His targets include the various hierarchies, hypocritical masquerades and rituals enforced on those playing the role of Artist, from refraining from lowering oneself to doing anything too hands-on or technical (there are operators for that) to the politics and carefully circumscribed modes of relating to other people within the art world (a place seemingly as formalised as an 18th-century aristocratic court), to the somewhat less than inspiring reality facing an Artist who has Made It:

When I worked in advertising I was surprised to meet people who didn’t do anything. They are called ‘art directors’. People like myself that perform the actual tasks are called ‘operators’ and there is a strong class distinction which leads ‘art directors’ to cross their arms while speaking near any object that they may accidentally use*. I was employed to move text on a page for an irate person standing a few feet away from the means to do it. Apparently their pureness of thought would be sullied by contact with a mechanism.

I’ve said it too many times: the ideal of an artistic career is inertia. Innovate for a while. Find a practice, a style, a scheme that earns attention. Repeat it endlessly, never daring to step outside your persona because the system will need to bind you to an iconic representation of yourself. Do you reproduce famous paintings as slow motion videos? Or use a skateboard as your macguffin? Better stick to that. Keep on making action painting, or ‘industrial’ tape cut up until you die – which is your prime function, sealing off the quantity of your saleable work.

Artists that constrain themselves are recognised more quickly, they are funded, they are more acceptable to publications because they are easier to digest. They are the cheddar cheese of creativity, and when I am told that ‘all the best work is happening over here’, I know the place to look is anywhere but there. Innovation is part of a continuing vitality, and confusedly being alive is more important than being neatly dead. We should never ever pre-organise ourselves into categories that fit nicely in museums, journals and repositories. That’s like pinning yourself into a display case.

What will we call ourselves? The Kraftwerk guys were onto something when they called themselves ‘music workers’. But I have another idea. In advertising the term ‘creative’ is a mixed signal, it seems to be a positive, but can be a polite substitute for ‘operator’. I’ve often heard somebody say, ‘we’ll get our creatives onto that’. It means ‘all slaves to the oars’. If so, perhaps we can claim ‘creative’ or ‘operator’ back. It can be our own swearword.

2013/3/24

The Bacon-Wrapped Economy, an article looking at how the rise of a stratum of extremely well-paid engineers and wealthy dot-com founders, mostly in their 20s, has changed the San Francisco Bay Area, economically and culturally:

You don't need to look hard to see the effects of tech money everywhere in the Bay Area. The housing market is the most obvious and immediate: As Rebecca Solnit succinctly put it in a February essay for the London Review of Books, "young people routinely make six-figure salaries, not necessarily beginning with a 1, and they have enormous clout in the housing market." According to a March 11 report by the National Low Income Housing Coalition, four of the ten most expensive housing markets in the country — San Francisco, San Mateo, Santa Clara, and Marin counties — were located in the greater Bay Area. Even Oakland, long considered a cheaper alternative to the city, saw an 11 percent spike in average rent between fiscal year 2011-12 and the previous year; all told, San Francisco and Oakland were the two American cities with the greatest increases in rent. Parts of San Francisco that were previously desolate, dangerous, or both are now home to gleaming office towers, new condos, and well-scrubbed people.

The economic effects of gentrification, soaring costs of living and previous generations of residents being priced out are predictable enough (and San Francisco has been suffering from similar effects since the 1990s dot-com boom, when a famous graffito in one of the city's then-seamy neighbourhoods read “artists are the shock troops of gentrification”). And then there are the effects of the city's wealthy elite being replaced by a new crop of the wealthy who, being in their 20s and from the internet world, share little of the aesthetic tastes and cultural assumptions of the traditional plutocracy, favouring street art over oil on canvas and laptop glitch mash-ups over the philharmonic; their clout has sent shockwaves through the philanthropic structures of patronage that supported high culture in the city:

Historically, most arts funding has, of course, come from older people, for the simple reason that they tend to be wealthier. But San Francisco's moneyed generation is now significantly younger than ever before. And the swath of twenties- and thirties-aged guys — they are almost entirely guys — that represents the fattest part of San Francisco's financial bell curve is, by and large, simply not interested.

"If you're talking the symphony or other classical old-man shit, I would say [interest] is very low," an employee at a smallish San Francisco startup recently told me. "The amount of people I know that give a shit about the symphony as opposed to the amount of people I know who would look at a cool stencil on the street ... is really small."And not only the content of philanthropy has changed, but so have the mechanisms. Just handing over money to a museum, without any strings, no longer cuts it to a generation of techies raised on test-driven development and the market-oriented philosophy of Ayn Rand, and believing in fast iteration, continuous feedback and quantifiable results. Consequently, donations to old-fashioned arts institutions have declined with the decline of the old money, but have largely been replaced by the rise of crowdfunding, with measurable results:

(Kickstarter) The self-described "world's largest funding platform for creative projects" has, in its three-year existence, raised more than half a billion dollars for more than 90,000 projects and is getting more popular by the day; at this point, it metes out roughly twice as much money as the National Endowment for the Arts. And though hard statistics are difficult to come by, it's clear that this is a funding model that's taken particular hold in the tech world, even over traditional mechanisms of philanthropy. "Arts patronage is definitely very low," one tech employee said. "But it's like, Kickstarters? Oh, off the map." Which makes sense — Kickstarter is entirely in and of the web, and possibly for that reason, it tends to attract people who are interested in starting and funding projects that are oriented toward DIY and nerd culture. But it represents a tectonic shift in the way we — and more specifically, the local elite, the people with means — relate to art.

"A lot of this is about the difference between consuming culture and supporting culture," a startup-world refugee told me a few weeks ago: If Old Money is investing in season tickets to the symphony and writing checks to the Legion of Honor, New Money is buying ultra-limited-edition indie-rock LPs and contributing to art projects on IndieGoGo in exchange for early prints. And if the old conception of art and philanthropy was about, essentially, building a civilization — about funding institutions without expecting anything in return, simply because they present an inherent, sometimes ineffable, sometimes free market-defying value to society, present and future, because they help us understand ourselves and our world in a way that can occasionally transcend popular opinion— the new one is, for better or for worse, about voting with your dollars.Which suggests the idea of the societal equivalent of the philosopher's zombie, a society radically restructured by a post-Reaganite, market-essentialist worldview, in which all the inefficient, inflexible bits of the old society, from philanthropic foundations in support of a greater Civilisation to senses of civic values and community, have been replaced by the effects of market forces: a world where, if society is assumed to be nothing but the aggregation of huge numbers of self-interested agents interacting in markets, things work as they did before, perhaps more efficiently in a lot of ways, and to the casual observer it looks like a society or a civilisation, only at its core, there's nothing there. Or perhaps there is one supreme value transcending market forces, the value of lulz, an affectation of nihilistic nonchalance for the new no-hierarchy hierarchies.

The article goes on to describe the changes to other things in San Francisco, such as the attire by which the elite identify one another and measure status (the old preppie brands of the East Coast are out, and in their place are luxury denim and “dress pants sweatpants” costing upwards of $100 a pair – a way of looking casual and unaffected, in the classic Californian-dude style, to the outside observer, whilst signalling one's status to those in the know as meticulously as a Brooks Brothers suit would in old Manhattan), the dining scene (which has become more technical and artisanal; third-wave coffee is mentioned) and an economy of internet-disintermediated personal services which has cropped up to tend to the needs of the new masters of the online universe:

And then there are companies like TaskRabbit and Exec, both of which serve as sort of informal, paid marketplaces for personal assistant-style tasks like laundry, grocery shopping, and household chores. (Workers who use TaskRabbit bid on projects in a race-to-the-bottom model, while Execs are paid a uniform $20 per hour, regardless of the work.) According to Molly Rabinowitz, a San Franciscan in her early twenties who briefly made a living doing this kind of work — though she declined to reveal which service she used — many tech companies give their employees a set amount of credit for these tasks a month or year, and that's in addition to the people using the services privately. "There's no way this would exist without tech," she said. "No way." At one point, Rabinowitz was hired for several hours by a pair of young Googlers to launder and iron their clothes while they worked from home. ("It was ridiculous. They didn't want to iron anything, but they wanted everything, including their T-shirts, to be ironed.") Another user had her buy 3,000 cans of Diet Coke and stack them in a pyramid in the lobby of a startup "because they thought it would be fun and quirky." Including labor, gas, and the cost of the actual soda, Rabinowitz estimated the entire project must have cost at least several hundred dollars. "It's like ... you don't care," she said. "It doesn't mean anything because it's not your money. Or there's just so much money that it doesn't matter what you spend it on."

2013/2/4

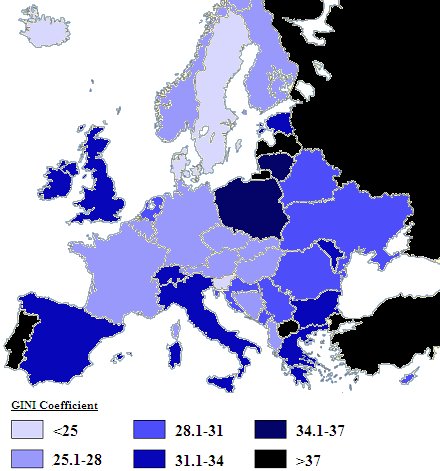

The economic difference between London, a global centre of finance, where wealth is conjured into being and every Russian oligarch and Saudi princeling worth his salt has to have a pied à terre, and the rest of Britain is drawn into sharp relief by a recent property value survey:

Research shows that the net value of properties in just 10 London boroughs – Westminster, Kensington & Chelsea, Wandsworth, Barnet, Camden, Richmond, Ealing, Bromley, Hammersmith & Fulham and Lambeth – now outstrips the worth of all the properties in Wales, Northern Ireland and Scotland combined.

The capital’s richest borough, Westminster, with 121,600 dwellings, is worth £95bn – more than twice the value of Edinburgh (pop 500,000) and three times that of England’s sixth most populous city, Bristol.

That's one thing one forgets about living in London: that this isn't normal. The high property values (and rents), the billions of pounds poured into public transport, the presence of everything from world-class art exhibitions to lunch options more interesting than a supermarket sandwich: none of this would be here were London not a global city-state of its stature, alongside the Singapores and Dubais of this world.

Of course, the downside of this is that London is considerably less affordable for those who aren't oligarchs, princelings or otherwise loaded.

2012/10/28

Another consequence of the Zuckerberg Doctrine, the belief that every person has one and only one identity which they use for all online social interactions: doctors in Britain are reporting an increase in infatuated patients pursuing them romantically via Facebook:

Figures compiled by the Medical Defence Union (MDU) show that the number of cases of doctors seeking its help because they are being pursued by a lovestruck patient rose from 73 in 2002-06 to 100 in 2007-11. Patients are increasingly using social media rather than letters or flowers to make their feelings clear, such as following a doctor on Twitter, "poking" them on Facebook or flirting with them online.

A female GP was asked out for a drink by a male patient as she left her surgery. When she declined, he began to pester her via Facebook and sent her a bunch of lilies, which she had listed as her favourite flowers on her Facebook page. On MDU advice, she changed her security and privacy settings on the site so that only chosen friends could view her postings.

Of course, it is unreasonable to ask doctors (and, indeed, other public-facing professionals; teachers, police, social workers and legal aid workers come to mind) to delete their Facebook accounts and not use social software. For one, in this day and age, disconnecting from social software means virtual exile; Facebook refuseniks find themselves out of the loop, relying on the charity of friends with Facebook accounts and free time to keep them informed of everything from party invitations to when mutual friends had a baby, got divorced or moved abroad. And then there is the increasing public expectation that well-adjusted citizens have a Facebook profile, and one with normal activity patterns. Already there is talk about governments requiring citizens to log in with Facebook/Google identities to access services, so a normal Facebook record, with the requisite casual-though-not-debauched photos and history of social chatter, is increasingly starting to look like a badge of good citizenship, well-adjustedness and general non-terroristicity. And having two accounts, one for your professional persona, and one for your personal life, is expressly verboten by orders of Mark Zuckerberg and Vic Gundotra, as mandated by the advertisers who demand accurate records of eyeballs sent their way and the shareholders who demand steady advertising revenue.

So now, by the immutable facts of neoliberal capitalism in the internet age, we have a world where people have only one face they present to the world, one with their wallet name, career record, list of friends and social activity attached. This face is visible to everyone from old friends to employers to any members of the public one has a professional duty of care to. Perhaps there's a Californian jeans-and-T-shirts casualness to forcibly unifying these facets; to not allowing a distinction between the uniform of professionalism one wears in one's career and the accoutrements of one's casual, personal life; to knowing that your doctor's favourite flower is the lily, your geography teacher was in a moderately well-known math-rock band, or the police officer you reported your lost phone to is an Arsenal fan and known to his mates as Beans; though the downside of the casualisation of professional life is the professionalisation of casual life, a sort of Bay Area take on superlegitimacy. And while in Britain today, that may take the form of doctors self-censoring to avoid the possibility of obsessive patients, in parts of the US, where employers can fire workers for their political or personal views, sexual orientation or even sporting loyalties, the stakes are higher.

Whether the Zuckerberg Doctrine is the inescapable future, in which everyone is coerced into an endless, joyless social game of simulating a model citizen as if under the watchful eyes of an outsourced Stasi, however, is another question. Facebook's unquestionable hegemony is starting to show its first cracks. For now, it remains the default grapevine, the standard channel of social chatter; however, its declining share price seems to be pushing Facebook to more aggressively monetise the relationships of its nominally captive audience, pushing more ads and sponsored stories, asking users to pay for their messages to be seen by their friends (whose feeds can only contain so many updates, after all, and there are commercial sponsors to compete with), and, the implication goes, throttling back how much unsponsored chatter a user sees. As this ratchets up, eventually people will notice that their friends' announcements and photos aren't making it to them, but the fact that their friend ostensibly likes Toyota or Red Bull is, and will start tuning out. Then Facebook will decline, as MySpace and Friendster did before it, and something else will take its place.

Perhaps the best thing to hope for is that whatever fills the niche occupied by Facebook will be not so much a service but a decentralised system of independent services, each free to set its own terms and policies. They could be based on a protocol such as Tent or Diaspora*, and, as the servers interact, allow for great diversity; some servers will be free to use but spam your eyeballs with ads until they bleed, others will charge, say, $25 a year and offer ad-free unlimited hosting; some will have Zuckerbergian wallet-name policies, others will allow users to choose the pseudonyms of their choice (as, say, LiveJournal did back in the day, and community-oriented web forums often do), with some uptight silos only federating with others with wallet-name policies, and being seen by those outside of those as terminally square. And, of course, unlike on Facebook, there will be nothing stopping someone from having multiple accounts. Of course, there will be nothing preventing people from running their own silos, though any system which depends on people doing this will become a ghetto of deep geeks with UNIX beards who enjoy setting up such systems, to the exclusion of everyone else.

2012/10/1

An article in the Guardian presents a scenario on the privacy risks even the most careful social media output could pose when analysed with data-mining software descended from that currently in existence:

"Tina Porter, 26. She's what you need for the transpacific trade issues you just mentioned, Alan. Her dissertation speaks for itself, she even learned Korean..." He pauses.Of course, if employers (and health insurance companies and the police and organised criminals and advertising firms and psychotic stalkers) can data-mine a tendency to get migraines from the fluctuation of the vocabulary of one's posts, one might suggest that those with a healthy amount of paranoia should avoid social media altogether, beyond having a simple static page that gives away absolutely nothing. Except that not having an active social media profile is increasingly seen as suspicious in itself; if you're not tweeting your TV viewing or Instagramming your sandwiches and leaving a statistically normal trail of well-adjusted narcissistic exhibitionism, there's a nonzero probability that you might be the next Unabomber; and, in any case, the HR department who knocked Tina Porter back for her carefully concealed migraines would certainly not even look at the CV of the potential ticking timebomb whose online profile draws a blank.

"But?..." Asks the HR guy.

"She's afflicted with acute migraine. It occurs at least a couple of times a month. She's good at concealing it, but our data shows it could be a problem," Chen says.

"How the hell do you know that?"

"Well, she falls into this particular Health Cluster. In her Facebook babbling, she sometimes refers to a spike in her olfactory sensitivity – a known precursor to a migraine crisis. In addition, each time, for a period of several days, we see a slight drop in the number of words she uses in her posts, her vocabulary shrinks a bit, and her tweets, usually sharp, become less frequent and more nebulous. That's an obvious pattern for people suffering from serious migraine. In addition, the Zeo Sleeping Manager website and the stress management site HeartMath – both now connected with Facebook – suggest she suffers from insomnia. In other words, Alan, we think you can't take Ms Porter in the firm. Our Predictive Workforce Expenditure Model shows that she will cost you at least 15% more in lost productivity."

So, if this sort of thing comes to pass (and whether that sort of data could be extracted from social data with few enough false positives to be useful is a big if), we may eventually see an age of radical transparency, where everyone knows who's likely to be marginally more or less productive, along with possible laws regulating when this may be taken into account. Either that or the evolution of Gattaca-style systems and techniques for chaffing one's social data trail and masking any deficiencies which it may betray, in an ever-escalating arms race with new analytical techniques designed to detect such gaming.

2012/9/17

The Observer has an article about the phenomenon of “friend clutter” on social network services; in short: while it's easy to “friend” people, removing someone from one's circle of acquaintance is inherently a hostile act; there is no cultural provision for severing notional ties with people one has no actual ties with on a no-fault basis. (At least, this is the case in England, where making a scene is something impetuous foreigners do, and Just Not Done; it'd be interesting to see whether people are quicker to sever online acquaintances in more brusque locales, say, Berlin, Moscow or Tel Aviv.) And hence, we end up with friend lists full of strangers:

Even "unfriending" someone on Facebook, the closest equivalent to Bierce's proposal, feels like delivering a slap in the face (and not even a well-timed slap, since you can't be sure when they'll find out). Facebook itself hates unfriending, for commercial reasons, and thus makes it easy to hide updates from tiresome contacts without their knowing – a deeply unsatisfactory arrangement that leaves you at constant risk of meeting someone face-to-face who assumes you must already know they've got engaged, or had another baby, or been dumped, or fired, or widowed.

If that sounds a heartless way to think about other people, consider the parallels. Physical clutter, as a widespread problem, is only as old as modern consumerism: before the availability of cheap gadgets, clothes and self-assembly furniture, it wasn't an option for most people to accumulate basements full of unwanted exercise bikes, games consoles or broken Ikea bookshelves. We think we want this stuff, but, once it becomes clutter, it exerts a subtle psychological tug. It weighs us down. The notion of purging it begins to strike as us appealing, and dumping all the crap into bin bags feels like a liberation. "Friend clutter", likewise, accumulates because it's effortless to accumulate it: before the internet, the only bonds you'd retain were the ones you actively cultivated, by travel or letter-writing or phone calls, or those with the handful of people you saw every day. Friend clutter exerts a similar psychological pull. The difference, as Bierce understood, comes with the decluttering part: exercise bikes and PlayStations don't get offended when you get rid of them. People do. 
So we let the clutter accumulate.

And while the psychological impact of severing a friendship (even one that only exists as a row in a database, in which neither party remembers who the other actually is) can be mildly traumatic (there have been neurological studies that showed that social/romantic rejection stimulates the same parts of the brain as physical pain; I wouldn't be surprised if awareness of a severed connection worked similarly), another factor is the business models of social software services, such as Facebook, whose balance sheet depends on as many people as possible seeing which brands other people they “know” in some sense or other liked; hence another layer of polite hypocrisy is invented: the hidden, passive “friendship”, in which one doesn't have to see anything about the life of one's notional acquaintance, but can avoid the minor agony of forever writing them out of one's life. (And unfriending, it goes without saying, is forever, or at least without a damned good apology.)

The more profound truth behind friend clutter may be that, as a general rule, we don't handle endings well. "Our culture seems to applaud the spirit, promise and gumption of beginnings," writes the sociologist Sara Lawrence-Lightfoot in her absorbing new book, Exit: The Endings That Set Us Free, whereas "our exits are often ignored or invisible". We celebrate the new – marriages, homes, work projects – but "there is little appreciation or applause when we decide (or it is decided for us) that it's time to move on". We need "a language for leave-taking", Lawrence-Lightfoot argues, and not just for funerals. A terminated friendship, after all, needn't necessarily signal a horrifying defeat, to be expunged from memory. One might just as easily think of it as "completed".

Mullany recommends a friend-decluttering exercise that she admits sounds "weird", but that she predicts will become more and more widely accepted. She advises making a public proclamation on Facebook in which you specify the criteria by which you'll henceforth be defining people as "friends". Maybe you'll resolve only to remain Facebook friends with people you've met at least once in real life, or maybe you'll use a stricter standard, such as whether you'd invite that person to your wedding. Explain, in the same proclamation, that the consequent defriending shouldn't be taken personally, and that you're doing it to a number of people at once. Then start clearing out the clutter.

If Zuckerberg's insistence that everyone should be friends with everyone prompts us, out of necessity, to winnow our lists to a smaller group of people we truly cherish, he'll have done something admirable, even if it's the opposite of what he intended.

Indeed; though whether it prompts people to circle the wagons and insist on only remaining attached to people they have met recently or would go for a drink with is another question. Part of the utility of services like Facebook and whatever succeeds it would be to keep in low-level ambient contact with people whom one is not friends with in the classic sense of friendship: old school buddies, ex-coworkers, people one met a few times some years ago, and so on. Of course, the amount of attention these people might have for one is probably somewhat limited, so updates would be limited to the major things: changes of location, marital status, sex, and that sort of thing. Which strikes me as quite distinct from the interactions one has with one's active online friends: the stream of updates about one's life peppered with amusing links, usually involving cats.

2012/9/1

New York is facing an onslaught of middle-aged subway taggers; latchkey kids from the 1970s and 1980s who never put graffiti vandalism behind them, or else who decided to recapture their lost youth, spurred on by one thing or another to pick up their spraycans and get back into the game:

In torn jeans and saddled with a black backpack, Andrew Witten glances up and down the street for police. The 51-year-old then whips out a black marker and scribbles "Zephyr" on a wall covered with movie posters. He admires his work for a few seconds before his tattooed arms reach for his daughter, holding her hand as he briskly walks away.

Witten's brush with fame now often comes with his freelance art writing and his sporadic visits to his daughter's school, where he teaches her classmates how to draw. Lulu knows her father draws "crazy art," a term she picked up from seeing graffiti on trains.

For decades, Ortiz, 45, has been known on Manhattan's Lower East Side as LA II. A traumatic loss of a girlfriend brought him out of a 14-year hiatus from graffiti writing. He has since been caught three times spraying his tag on property, each time while walking a friend's dog. "Everywhere that dog stopped to pee I would write my name," Ortiz says. "The streets were like my canvases. I just started writing my name everywhere."

Alternatively, it could be argued that he and his dog bonded by participating in territory-marking activities together.

2012/8/27

There's a piece in the Guardian about the rise of crowdfunding, and how it shifted from a medium for art projects to a means of decentralised organisation of practical endeavours requiring money, and turned into a means for circumventing market failures:

Kickstarter itself is changing under the influence of digital culture. At first it was about making established forms of art. Film was big – documentaries about organic community vegetable gardens were not uncommon. Now that is changing. It is becoming a land of gadget makers and gamers.

This new communal instinct can do amazing things like route around the warping influence of capitalism and digital platform wars. Look at projects like Open Trip Planner. This takes a bit of unravelling but basically the benefit of good maps on smartphones became endangered by Apple's titanic battle for market supremacy with Google. Apple are attempting to strip Google products like maps from iPhones and this left users with crappy transport info – Open Trip Planner is the communal answer to a hierarchical fall out.

The article mentions OpenTripPlanner, an open-source alternative to trip planning systems which seems to be doing for trip planning what OpenStreetMap did for geodata, and the Pebble watch, a Bluetooth-enabled smart watch designed without the backing of a large electronics corporation, and the fact that Kickstarter is expanding to the UK.

In other crowdfunding news, Matthew Inman, who runs The Oatmeal web comic, recently launched a crowdfunding campaign to raise US$850,000 to buy Nikola Tesla's old laboratory (put on the market by AGFA and expected to be bought by property developers); the campaign met its target in under a week and has since raised over a million dollars.

2012/8/25

Wary of the possibility that a population of educated, frustrated women could result in pressure for political liberalisation, Iran's government has moved to preempt this by declaring 70 university courses to be for men only:

It follows years in which Iranian women students have outperformed men, a trend at odds with the traditional male-dominated outlook of the country's religious leaders. Women outnumbered men by three to two in passing this year's university entrance exam.

Senior clerics in Iran's theocratic regime have become concerned about the social side-effects of rising educational standards among women, including declining birth and marriage rates.

Of course, if women outperform men in academic fields, banning women may make the men feel better about their performance, but it will be less salutary for Iran's economy if half of all potential knowledge workers are prohibited by law from developing their potential.

This is at odds with the arguably more progressive Saudi approach (and "progressive" and "Saudi" aren't two words I expected to write adjacent to each other) of planning segregated women-only cities, where the nation's educated, otherwise frustrated women can work in industry on a “separate but equal” basis. (I wonder how long that will last; eventually, I imagine it'll lead to those invested in the status quo deciding that it's a threat and attacking it; starving it of resources, imposing crippling restrictions on it, and eventually shutting it down and sending the women back to the authority of their male family members, and the city will go the way the USSR's Jewish Autonomous Oblast did once Stalin found it too threatening.)

And Iran is moving to further remind women of their place under an Islamic theocracy, by moving to legalise the marriage of girls under 10. The current age at which girls can be married in the Islamic Republic is 10, down from 16 before the revolution.

2012/6/13

Researchers in the US have been investigating the question of what is “cool” from a psychological perspective, hitting the dichotomy between the two opposite poles which can be described with this term: on one hand, agreeability and popularity, and, on the other hand, a vaguely antisocial countercultural/oppositional stance reflected in the classic iconography of rebels and outlaws from the history of cool:

"I got my first sunglasses when I was about 13," said Dar-Nimrod. "There wasn't a cooler kid on the block for the next few days. I was looking cool because I was distant from people. My emotions were not something they could read. I put a filter between me and everyone else. That, in my mind, made me cool. Today, that doesn't seem to be supported. If anything, sociability is considered to be cool, being nice is considered to be cool. And in an oxymoron, being passionate is considered to be cool—at least, it is part of the dominant perception of what coolness is. How can you combine the idea of cool—emotionally controlled and distant—with passionate?"

"We have a kind of a schizophrenic coolness concept in our mind," Dar-Nimrod said. "Almost any one of us will be cool in some people's eyes, which suggests the idiosyncratic way coolness is evaluated. But some will be judged as cool in many people's eyes, which suggests there is a core valuation to coolness, and today that does not seem to be the historical nature of cool. We suggest there is some transition from the countercultural cool to a generic version of it's good and I like it. But this transition is by no way completed."

The researchers claim that the concept of “cool” is mutating away from the oppositional/rebellious sense and towards straight agreeability.

If this phenomenon does bear itself out, there may be a number of possible explanations. Perhaps, as the countercultural struggles against the repressive hegemony of the “squares” have receded into folk memory of The Fifties and everyone wears jeans, listens to rock and has smoked a joint at least once in their lives, the idea of the rebel is left with even less of a cause than before. Perhaps the shift in the meaning of “cool” has something to do with the ongoing process of commodification of the counterculture, with the sneers and icy glares of vintage cool now being little more than a mask for agreeable dudes to put on when the occasion suits. Or perhaps, in the information age, being agreeable and well-connected confers a greater advantage than being tough and detached. One would imagine that this would be the case in most normal situations, in which case, the old world of tough guys and strong, silent types would have been an anomalous case, a hostile environment which traumatised its inhabitants into growing expensive carapaces of character armour.

Another option would be that the meaning of “cool” is not, in fact, changing (this study doesn't seem to involve surveys done decades earlier to gauge what people thought at the time, and compares living attitudes with canned stereotypes), and that the word “cool” has several meanings; when it's used as a term of approval for a person, it has always indicated agreeability, whereas when talking about fictional characters, it suggested a certain type of antiheroic asshole.

2012/6/1

2012/5/6

In the UK, nightclub bouncers are requiring punters to show them their Facebook profiles on their phones as a condition of entry, ostensibly to weed out the underage. Civil liberties groups and a door staff training firm claim that this is illegal, while some bar owners and bouncers defend the practice, citing heavy fines levied in the event of staff accidentally letting in a minor with a fake ID.

2012/4/18

A modest proposal from security commentator Alec Muffett: Could we replace marriage with incorporation of private limited companies?

in the UK the biggest hurdle would be IR35 and certain aspects of expense policy, but you’d have a contract between the directors, clear dissolution clauses; all income could be directed to the corporation – taxed differently and offset against OPEX like nappies and so forth – and salaries paid out, plus it would own and depreciate shared assets like houses and cars. You could avoid much income tax by taking dividends – I know tax avoidance is infra dig at the moment, but I’m thinking of the future here – and you could offshore your marriage for better breaks.

But there would be no gender issues – no incorporation is between a man and woman – plus you could have more than one company director, have corporations that outlast their founders, and in extreme circumstances outsource large chunks of the whole enterprise.

2012/4/15

A new study in the US has found a positive correlation between the number of big-box retail stores in an area and the number of hate groups in that area; the study used Wal-Mart as a proxy for big-box retailers:

The amount of Wal-Mart stores in a county was more statistically significant than other factors commonly regarded as important to hate group participation, such as the unemployment rate, high crime rates and low education, the research found.

"Wal-Mart has clearly done good things in these communities, especially in terms of lowering prices," said Stephan Goetz, a Penn State University professor who also serves as the director of the Northeast Regional Center for Rural Development. "But there may be indirect costs that are not as obvious as other effects."

It is speculated that the correlation may be due to the fraying of the social ties that exist in areas with smaller, less impersonal, shops. Whether Wal-Mart's owners' politics (generally well on the right of the Republican party) have anything to do with the correlation is unclear.

2012/3/4

Psychologist Bruce Levine makes the claim that, in the US, the psychological profession has a bias towards conformism and authoritarianism, and against anti-authoritarian tendencies. This bias apparently results from the institutional structure of the profession, which selects for and reinforces pro-conformist and pro-authoritarian tendencies, and manifests itself, among other things, in those who exhibit “anti-authoritarian tendencies” being caught, diagnosed with various mental illnesses and medicated into compliance before they can develop into actual troublemakers:

In my career as a psychologist, I have talked with hundreds of people previously diagnosed by other professionals with oppositional defiant disorder, attention deficit hyperactive disorder, anxiety disorder and other psychiatric illnesses, and I am struck by (1) how many of those diagnosed are essentially anti-authoritarians, and (2) how those professionals who have diagnosed them are not.

Anti-authoritarians question whether an authority is a legitimate one before taking that authority seriously. Evaluating the legitimacy of authorities includes assessing whether or not authorities actually know what they are talking about, are honest, and care about those people who are respecting their authority. And when anti-authoritarians assess an authority to be illegitimate, they challenge and resist that authority—sometimes aggressively and sometimes passive-aggressively, sometimes wisely and sometimes not.

Some activists lament how few anti-authoritarians there appear to be in the United States. One reason could be that many natural anti-authoritarians are now psychopathologized and medicated before they achieve political consciousness of society’s most oppressive authorities.

Showing hostility to or resentment of authority will get one diagnosed with various conditions, such as “oppositional defiant disorder (ODD)”, a condition which manifests itself in deficits in “rule-governed behaviour”, and for which, as for many parts of the human condition, there are many types of corrective medication these days. (Compare this to the condition of “sluggish schizophrenia”, which only existed in the Soviet Union and manifested itself as a rejection of the self-evident truth of Marxism-Leninism.)

While pretty much every hierarchical society has mechanisms for encouraging conformity to some degree, Dr. Levine's contention is that the increase in psychiatric medication in recent years may be leading to a more authoritarian and conformist society.

2012/1/29

Idea of the day: the Happy Recession; the idea that the internet, through driving prices and costs down, will permanently deflate both prices and wages; the post-internet world, it seems, jams econo:

The most pernicious aspect of Internet entertainment is that it’s so easy to measure and so easy to mass-produce. So the moment something on the Internet gets fun enough to be competitive with the real-world analogue, it starts getting relentlessly improved until it’s vastly superior. World of Warcraft soaks up upwards of forty hours per week from serious fans, who pay about $15 per month for their subscriptions. Few other hobbies can consume so much time at such a low cost.

The web makes it easier to access non-traditional employees at much lower salaries. As we argued in our Demand Media analysis, the real story here is that a stay-at-home mom with a Masters in Journalism can write content that is good enough compared to a typical Madison Avenue copywriter, especially when the rate is $15 per article instead of six figures per year. This disaggregation of writing skill means that companies no longer have to hire good writers in order to write 5% good copy and 95% mediocre work; they can outsource the mediocre stuff and relegate the high-end work to a short-term freelancer.

The web offers cheap social status: In the long term, this may have a bigger effect than the web merely making digitizable products cheaper. Social status games drive a huge amount of economic activity: people strive to get into high-paying, high prestige career tracks, to win promotions and attendant raises, to live in the best neighborhoods and send their kids to the best schools. Few status games lack some kind of economic output—people who play sports well below the professional level still get some job opportunities out of it.

One could probably also add a geographical factor to this: in the age of cheap, ubiquitous opportunity, access to economic and cultural opportunities is less dependent on being located in a buzzing metropolis or creative-class hive. After all, if a copywriter or app developer can work from anywhere with creativity, things like music and art scenes (or whatever replaces the post-punk rock'n'roll era construction of the "music scene" in the cultural ecosystem) are centred around blogs rather than physical venues, and one doesn't even need to move to a different place to find like-minded people, then there would be less competition for living in more desirable areas, since the price of not doing so no longer includes disconnection from as many opportunities.

2012/1/24

The Bleeding Obvious: A sociological study from Australia has shown that people who fly national flags on their cars are more likely to harbour xenophobic attitudes, with both exclusionary views of who belongs in their society and hostility to those outside of the circle:

Professor Fozdar said 43 per cent of those with car flags said they believed the White Australia Policy had saved Australia from many problems experienced by other countries, while only 25 per cent without flags agreed.

A total of 55 per cent believed migrants should leave their old ways behind, compared with 30 per cent of those without flags.

"Very clear statistical differences in attitudes to diversity between those who fly car flags and those who don't, show that flag waving – while not inherently exclusionary – is linked in this instance to negative attitudes about those who do not fit the 'mainstream' stereotype," she said.

The study also revealed more young people flying flags than older people; perhaps a sign that a more liberal older generation who grew up in the wake of the cultural struggles of the Sixeventies and the Whitlam-era progressive consensus is being supplanted by the children of Howard, Hanson and Hillsong, whose views on what belongs in Australia are a lot narrower?

The study was done in Australia, surveying people flying the Australian flag on their cars in the run-up to Australia Day, though I imagine they'd get similar results for people flying the flag in other circumstances (such as wearing it as a cape at Big Day Out), or in other countries (I imagine those flying the Cross of St. George in England are more likely to vote UKIP, to have uttered the phrase "bloody Pakis" at some point in their lives, to have an aversion to eating "foreign muck", and to complain about foreigners "coming here and stealing our jobs and not working").

2011/12/31

A few random odds and ends which, for one reason or another, didn't make it into blog posts in 2011:

- Artificial intelligence pioneer John McCarthy died this year; though before he did, he wrote up a piece on the sustainability of progress. The gist of it is that progress is both sustainable and desirable, for at least the next billion years, with resource limitations being largely illusory.

- As China's economy grows, dishonest entrepreneurs are coming up with increasingly novel and bizarre ways of adulterating food:

In May, a Shanghai woman who had left uncooked pork on her kitchen table woke up in the middle of the night and noticed that the meat was emitting a blue light, like something out of a science fiction movie. Experts pointed to phosphorescent bacteria, blamed for another case of glow-in-the-dark pork last year. Farmers in eastern Jiangsu province complained to state media last month that their watermelons had exploded "like landmines" after they mistakenly applied too much growth hormone in hopes of increasing their size.

Until recently, directions were circulating on the Internet about how to make fake eggs out of a gelatinous compound comprised mostly of sodium alginate, which is then poured into a shell made out of calcium carbonate. Companies marketing the kits promised that you could make a fake egg for one-quarter the price of a real one.

- The street finds its own uses for things, and places develop local specialisations and industries: the Romanian town of Râmnicu Vâlcea has become a global centre of expertise in online scams, with industries arising to bilk the world's endless supply of marks, and to keep the successful scammers in luxury goods: